Testing Network Performance with iPerf

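A common way to check whether a network delivers the speed it should is to copy files over Windows network shares (Network Neighborhood) or FTP. That approach becomes inefficient when the test has to be repeated, which is where a tool like iperf is more convenient for measuring network throughput. iPerf3 has since been released: the first half of this article keeps the original iPerf2 content, and the second half adds a record of an iPerf3 test. iPerf2 and iPerf3 are not compatible with each other, but both can measure network performance. Choose the version that suits your software and test environment, and make sure the client and the server run the same version.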

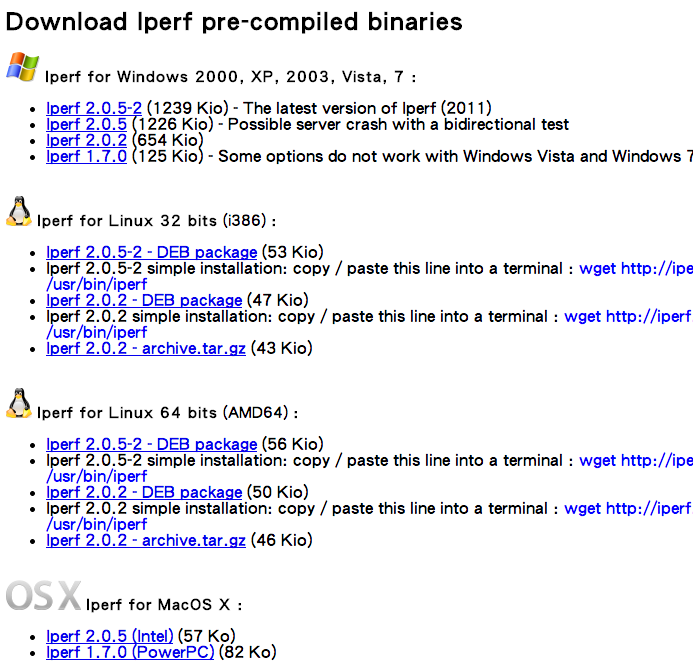

Download and Installation

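The official site provides builds for a variety of platforms. Pick the one for your environment, download it, and extract it; it is then ready to use.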

This walkthrough tests on Windows, so Iperf 2.0.5-2 was downloaded. The following sections show how to use it.

iperf Command Reference

Usage: iperf [-s|-c host] [options]

iperf [-h|--help] [-v|--version]

Client/Server:

-f, --format [kmKM] format to report: Kbits, Mbits, KBytes, MBytes

-i, --interval # seconds between periodic bandwidth reports

-l, --len #[KM] length of buffer to read or write (default 8 KB)

-m, --print_mss print TCP maximum segment size (MTU - TCP/IP header)

-o, --output <filename> output the report or error message to this specified file

-p, --port # server port to listen on/connect to

-u, --udp use UDP rather than TCP

-w, --window #[KM] TCP window size (socket buffer size)

-B, --bind <host> bind to <host>, an interface or multicast address

-C, --compatibility for use with older versions does not sent extra msgs

-M, --mss # set TCP maximum segment size (MTU - 40 bytes)

-N, --nodelay set TCP no delay, disabling Nagle's Algorithm

-V, --IPv6Version Set the domain to IPv6

Server specific:

-s, --server run in server mode

-U, --single_udp run in single threaded UDP mode

-D, --daemon run the server as a daemon

Client specific:

-b, --bandwidth #[KM] for UDP, bandwidth to send at in bits/sec

(default 1 Mbit/sec, implies -u)

-c, --client <host> run in client mode, connecting to <host>

-d, --dualtest Do a bidirectional test simultaneously

-n, --num #[KM] number of bytes to transmit (instead of -t)

-r, --tradeoff Do a bidirectional test individually

-t, --time # time in seconds to transmit for (default 10 secs)

-F, --fileinput <name> input the data to be transmitted from a file

-I, --stdin input the data to be transmitted from stdin

-L, --listenport # port to receive bidirectional tests back on

-P, --parallel # number of parallel client threads to run

-T, --ttl # time-to-live, for multicast (default 1)

-Z, --linux-congestion <algo> set TCP congestion control algorithm (Linux only)

Miscellaneous:

-x, --reportexclude [CDMSV] exclude C(connection) D(data) M(multicast) S(settings) V(server) reports

-y, --reportstyle C report as a Comma-Separated Values

-h, --help print this message and quit

-v, --version print version information and quit

[KM] Indicates options that support a K or M suffix for kilo- or mega-

The TCP window size option can be set by the environment variable

TCP_WINDOW_SIZE. Most other options can be set by an environment variable

IPERF_<long option name>, such as IPERF_BANDWIDTH.

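The options above are summarized below: those shared by the server and the client are grouped together, and the server-only and client-only options are explained separately.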

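| Parameter | Description |
|---|---|
| -s | Start in server mode, e.g. iperf -s |
| -c | Start in client mode, followed by the server address, e.g. iperf -c 192.168.1.3 |
| **Common options** | |
| -f [kmKM] | Unit used in the report: Kbits, Mbits, KBytes, or MBytes, e.g. iperf -c 192.168.1.3 -f K |
| -i sec | Interval between periodic reports, in seconds, e.g. iperf -c 192.168.1.3 -i 2 |
| -l [KM] | Length of the read/write buffer, 8 KB by default, e.g. iperf -c 192.168.1.3 -l 16K |
| -m | Print the TCP maximum segment size (MTU minus the TCP/IP header) |
| -o | Write the report and error messages to a file, e.g. iperf -c 192.168.1.3 -o c:\iperf-log.txt |
| -p | Port the server listens on or the client connects to; both ends must use the same port, e.g. iperf -s -p 9999 |
| -u | Use UDP instead of TCP |
| -w | TCP window size (socket buffer size). Listed here as 8 KB by default, though the actual default depends on the operating system |
| -B | Bind to a host address, which can be an interface or a multicast address; only needed when the host has several addresses |
| -C | Compatibility mode for older versions (use when the two ends run different versions) |
| -M | Set the TCP maximum segment size (MTU minus 40 bytes) |
| -N | Set TCP no-delay, which disables Nagle's algorithm |
| -V | Use IPv6 |
| **Server only** | |
| -D | Run the server as a background service (daemon), e.g. iperf -s -D |
| -U | Run in single-threaded UDP mode |
| **Client only** | |
| -b | UDP tests only: bandwidth to send at, in bits per second |
| -d | Run a bidirectional test with both directions at the same time |
| -n | Amount of data to transmit, in bytes (used instead of -t), e.g. iperf -c 192.168.1.3 -n 100000 |
| -r | Run a bidirectional test one direction at a time |
| -t | Test duration in seconds, 10 by default, e.g. iperf -c 192.168.1.3 -t 5 |
| -F | Transmit the contents of the specified file |
| -I | Transmit data read from stdin |
| -T | Time-to-live value, for multicast |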
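When a test has to be repeated with different options, the command line can be assembled programmatically. The following is a minimal Python sketch, assuming an iperf 2 binary named iperf is on the PATH. The helper name build_client_args and its parameter names are hypothetical, and it covers only a handful of the flags from the table above.

```python
from typing import List, Optional


def build_client_args(
    server: str,
    seconds: Optional[int] = None,
    interval: Optional[int] = None,
    window: Optional[str] = None,
    port: Optional[int] = None,
    udp: bool = False,
) -> List[str]:
    """Build an argument list for an iperf 2 client run (hypothetical helper)."""
    args = ["iperf", "-c", server]
    if udp:
        args.append("-u")                 # use UDP rather than TCP
    if port is not None:
        args += ["-p", str(port)]         # must match the server's port
    if window is not None:
        args += ["-w", window]            # TCP window size, e.g. "64K"
    if seconds is not None:
        args += ["-t", str(seconds)]      # test duration in seconds
    if interval is not None:
        args += ["-i", str(interval)]     # seconds between reports
    return args


if __name__ == "__main__":
    # Mirrors the -t and -i examples from the table.
    print(" ".join(build_client_args("192.168.1.3", seconds=5, interval=2)))
```

The returned list can be passed directly to subprocess.run, which avoids shell quoting problems when the same test is scripted for several hosts.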

Running the Test

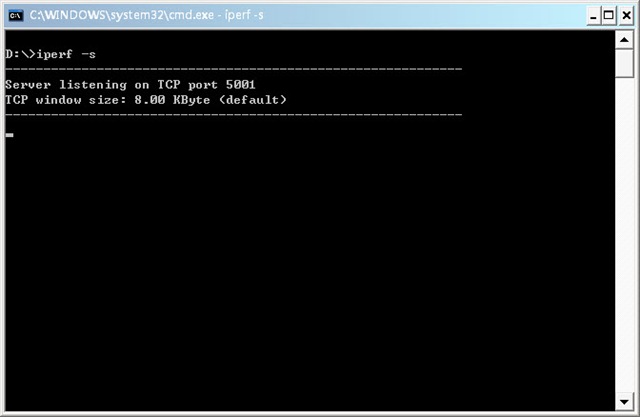

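iperf needs two computers: one acts as the server and the other as the client. The demonstration uses Windows machines on a Gigabit network, and the steps are split into server and client.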

Server:

With the defaults, the server command is very simple. Just run

iperf -s

and the server starts listening, as shown in the screenshot below.

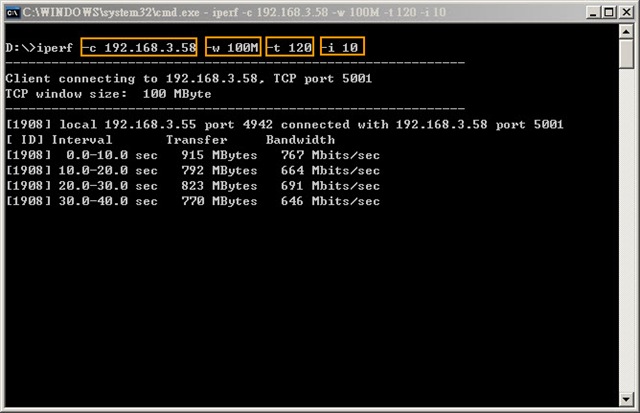

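Client:

The client has more options, depending on the kind of test you want to run. This demonstration uses the following command:

iperf -c 192.168.3.58 -w 100M -t 120 -i 10

Explanation:

- -c 192.168.3.58: IP address of the server
- -w 100M: TCP window size (socket buffer size) of 100 MB; this is not the amount of data transferred
- -t 120: run the test for 120 seconds
- -i 10: print a report every 10 seconds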


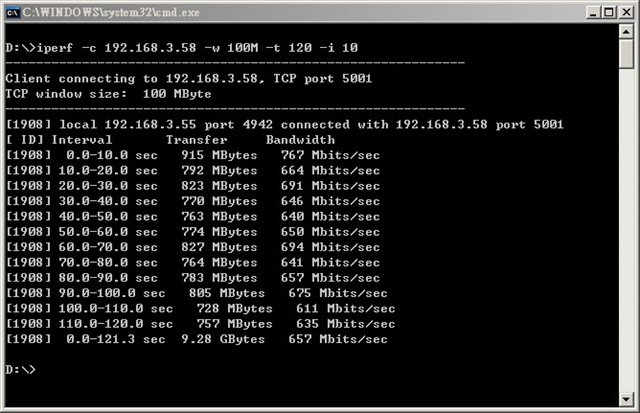

Test Results

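The command takes roughly 120 seconds to run. The result report in the screenshot shows that the test ran from 0.0 to 121.3 seconds and transferred 9.28 GBytes, for an average bandwidth of about 657 Mbits/sec. That is roughly two-thirds of the nominal Gigabit speed, which is not an ideal figure for a Gigabit network. To approach full speed you need to shut down other network software and any built-in QoS mechanism before testing, so this run did not reach the average of about 800 Mbits/sec that I saw the first time I tested.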
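The reported average can be checked by hand from the transfer size and the duration. The sketch below assumes that a GByte in the report means 2^30 bytes and that Mbits/sec means 10^6 bits per second, which is consistent with the numbers shown; the function name is hypothetical.

```python
def average_mbits_per_sec(gbytes: float, seconds: float) -> float:
    """Convert a transfer size in GBytes (2**30 bytes) over a duration to Mbits/sec (10**6 bits)."""
    bits = gbytes * 2**30 * 8
    return bits / seconds / 1e6


if __name__ == "__main__":
    rate = average_mbits_per_sec(9.28, 121.3)
    print(f"{rate:.0f} Mbits/sec")           # prints "657 Mbits/sec"
    print(f"{rate / 1000:.0%} of 1 Gbit/s")  # prints "66% of 1 Gbit/s"
```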

iPerf3

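After a long wait, iPerf finally reached version 3.0, commonly called iPerf3; the older 2.0 line is referred to as iPerf2. To test with iPerf3, both the client and the server must run iPerf3. The official site states this explicitly: "iPerf3 is not backwards compatible with iPerf2." The parameters used in the first half of this article can be reused without changes.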

Downloading iPerf3

iPerf now supports more platforms. Download it from the download page on the official site; the tests below use iPerf 3.1.3.

Testing with iPerf3

The logs below record an iPerf3 test between a client and a server, for reference.

Client

The client runs Windows, and the server runs Arch Linux.

D:\iperf-3.1.3-win64>iperf3 -c 192.168.16.200 -w 100M -t 120 -i 10
Connecting to host 192.168.16.200, port 5201
[ 4] local 192.168.16.223 port 58573 connected to 192.168.16.200 port 5201
[ ID] Interval Transfer Bandwidth
[ 4] 0.00-10.00 sec 1.20 GBytes 1.03 Gbits/sec
[ 4] 10.00-20.00 sec 1.10 GBytes 947 Mbits/sec
[ 4] 20.00-30.00 sec 1.10 GBytes 947 Mbits/sec
[ 4] 30.00-40.00 sec 1.10 GBytes 947 Mbits/sec
[ 4] 40.00-50.00 sec 1.10 GBytes 948 Mbits/sec
[ 4] 50.00-60.00 sec 1.10 GBytes 948 Mbits/sec
[ 4] 60.00-70.00 sec 1.10 GBytes 945 Mbits/sec
[ 4] 70.00-80.00 sec 1.10 GBytes 946 Mbits/sec
[ 4] 80.00-90.00 sec 1.10 GBytes 947 Mbits/sec
[ 4] 90.00-100.00 sec 1.10 GBytes 947 Mbits/sec
[ 4] 100.00-110.00 sec 1.10 GBytes 947 Mbits/sec
[ 4] 110.00-120.00 sec 1.10 GBytes 947 Mbits/sec
- - - - - - - - - - - - - - - - - - - - - - - - -
[ ID] Interval Transfer Bandwidth
[ 4] 0.00-120.00 sec 13.3 GBytes 954 Mbits/sec sender
[ 4] 0.00-120.00 sec 13.2 GBytes 947 Mbits/sec receiver
iperf Done.
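When a log like this has to be compared across several runs, the per-interval rates can be extracted with a short script. This is a minimal Python sketch that assumes the column layout shown in the log above (ID, interval, transfer, bandwidth) and skips the header and the sender/receiver summary rows. The names ROW, SCALE, and interval_rates_mbits are hypothetical, not part of iPerf.

```python
import re
from statistics import mean
from typing import List

# Matches interval rows such as "[  4]  10.00-20.00 sec  1.10 GBytes  947 Mbits/sec".
# Summary rows end in "sender" or "receiver" and therefore do not match.
ROW = re.compile(
    r"\[\s*\d+\]\s+(\d+\.\d+)-(\d+\.\d+)\s+sec\s+"
    r"[\d.]+\s+\w?Bytes\s+([\d.]+)\s+([KMG]?)bits/sec\s*$"
)
SCALE = {"": 1e-6, "K": 1e-3, "M": 1.0, "G": 1e3}  # unit prefix -> Mbits/sec


def interval_rates_mbits(report: str) -> List[float]:
    """Return the bandwidth of every interval row in Mbits/sec."""
    rates = []
    for line in report.splitlines():
        match = ROW.search(line)
        if match:
            rates.append(float(match.group(3)) * SCALE[match.group(4)])
    return rates


if __name__ == "__main__":
    sample = """\
[ ID] Interval Transfer Bandwidth
[ 4] 0.00-10.00 sec 1.20 GBytes 1.03 Gbits/sec
[ 4] 10.00-20.00 sec 1.10 GBytes 947 Mbits/sec
[ 4] 0.00-120.00 sec 13.3 GBytes 954 Mbits/sec sender
"""
    rates = interval_rates_mbits(sample)
    print(len(rates), "intervals, mean", round(mean(rates), 1), "Mbits/sec")
```

For scripted runs, iperf3 can also emit JSON with its -J option, which avoids text parsing; the regex approach is shown only because it works on a plain log that has already been captured.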

Server

Because two Windows machines were not available for this test, the server runs Arch Linux.

[danny@lab-p5e-vm ~]$ iperf3 -s
--------------------------------------------------[danny@lab-p5e-vm ~]---------
Server listening on 5201
-----------------------------------------------------------
Accepted connection from 192.168.16.223, port 58572
[ 5] local 192.168.16.200 port 5201 connected to 192.168.16.223 port 58573
[ ID] Interval Transfer Bandwidth
[ 5] 0.00-1.00 sec 108 MBytes 903 Mbits/sec
[ 5] 1.00-2.00 sec 113 MBytes 948 Mbits/sec
[ 5] 2.00-3.00 sec 113 MBytes 948 Mbits/sec
[ 5] 3.00-4.00 sec 113 MBytes 948 Mbits/sec
[ 5] 4.00-5.00 sec 113 MBytes 947 Mbits/sec
[ 5] 5.00-6.00 sec 113 MBytes 948 Mbits/sec
[ 5] 6.00-7.00 sec 113 MBytes 949 Mbits/sec
[ 5] 7.00-8.00 sec 113 MBytes 945 Mbits/sec
[ 5] 8.00-9.00 sec 113 MBytes 944 Mbits/sec
[ 5] 9.00-10.00 sec 113 MBytes 949 Mbits/sec
[ 5] 10.00-11.00 sec 113 MBytes 947 Mbits/sec
[ 5] 11.00-12.00 sec 113 MBytes 949 Mbits/sec
[ 5] 12.00-13.00 sec 113 MBytes 946 Mbits/sec
[ 5] 13.00-14.00 sec 113 MBytes 949 Mbits/sec
[ 5] 14.00-15.00 sec 113 MBytes 949 Mbits/sec
[ 5] 15.00-16.00 sec 113 MBytes 947 Mbits/sec
[ 5] 16.00-17.00 sec 112 MBytes 941 Mbits/sec
[ 5] 17.00-18.00 sec 113 MBytes 949 Mbits/sec
[ 5] 18.00-19.00 sec 113 MBytes 944 Mbits/sec
[ 5] 19.00-20.00 sec 113 MBytes 949 Mbits/sec
[ 5] 20.00-21.00 sec 113 MBytes 947 Mbits/sec
[ 5] 21.00-22.00 sec 113 MBytes 948 Mbits/sec
[ 5] 22.00-23.00 sec 113 MBytes 949 Mbits/sec
[ 5] 23.00-24.00 sec 113 MBytes 947 Mbits/sec
[ 5] 24.00-25.00 sec 113 MBytes 946 Mbits/sec
[ 5] 25.00-26.00 sec 113 MBytes 949 Mbits/sec
[ 5] 26.00-27.00 sec 113 MBytes 948 Mbits/sec
[ 5] 27.00-28.00 sec 113 MBytes 947 Mbits/sec
[ 5] 28.00-29.00 sec 113 MBytes 949 Mbits/sec
[ 5] 29.00-30.00 sec 111 MBytes 933 Mbits/sec
[ 5] 30.00-31.00 sec 113 MBytes 949 Mbits/sec
[ 5] 31.00-32.00 sec 113 MBytes 947 Mbits/sec
[ 5] 32.00-33.00 sec 113 MBytes 949 Mbits/sec
[ 5] 33.00-34.00 sec 113 MBytes 948 Mbits/sec
[ 5] 34.00-35.00 sec 113 MBytes 944 Mbits/sec
[ 5] 35.00-36.00 sec 113 MBytes 946 Mbits/sec
[ 5] 36.00-37.00 sec 113 MBytes 948 Mbits/sec
[ 5] 37.00-38.00 sec 113 MBytes 944 Mbits/sec
[ 5] 38.00-39.00 sec 113 MBytes 949 Mbits/sec
[ 5] 39.00-40.00 sec 113 MBytes 949 Mbits/sec
[ 5] 40.00-41.00 sec 113 MBytes 947 Mbits/sec
[ 5] 41.00-42.00 sec 113 MBytes 949 Mbits/sec
[ 5] 42.00-43.00 sec 113 MBytes 948 Mbits/sec
[ 5] 43.00-44.00 sec 113 MBytes 948 Mbits/sec
[ 5] 44.00-45.00 sec 113 MBytes 947 Mbits/sec
[ 5] 45.00-46.00 sec 113 MBytes 949 Mbits/sec
[ 5] 46.00-47.00 sec 113 MBytes 949 Mbits/sec
[ 5] 47.00-48.00 sec 113 MBytes 949 Mbits/sec
[ 5] 48.00-49.00 sec 113 MBytes 947 Mbits/sec
[ 5] 49.00-50.00 sec 113 MBytes 945 Mbits/sec
[ 5] 50.00-51.00 sec 113 MBytes 949 Mbits/sec
[ 5] 51.00-52.00 sec 113 MBytes 948 Mbits/sec
[ 5] 52.00-53.00 sec 113 MBytes 948 Mbits/sec
[ 5] 53.00-54.00 sec 113 MBytes 950 Mbits/sec
[ 5] 54.00-55.00 sec 113 MBytes 947 Mbits/sec
[ 5] 55.00-56.00 sec 113 MBytes 948 Mbits/sec
[ 5] 56.00-57.00 sec 113 MBytes 948 Mbits/sec
[ 5] 57.00-58.00 sec 113 MBytes 949 Mbits/sec
[ 5] 58.00-59.00 sec 113 MBytes 948 Mbits/sec
[ 5] 59.00-60.00 sec 113 MBytes 947 Mbits/sec
[ 5] 60.00-61.00 sec 111 MBytes 931 Mbits/sec
[ 5] 61.00-62.00 sec 113 MBytes 946 Mbits/sec
[ 5] 62.00-63.00 sec 112 MBytes 940 Mbits/sec
[ 5] 63.00-64.00 sec 113 MBytes 949 Mbits/sec
[ 5] 64.00-65.00 sec 113 MBytes 947 Mbits/sec
[ 5] 65.00-66.00 sec 113 MBytes 944 Mbits/sec
[ 5] 66.00-67.00 sec 113 MBytes 949 Mbits/sec
[ 5] 67.00-68.00 sec 113 MBytes 948 Mbits/sec
[ 5] 68.00-69.00 sec 113 MBytes 948 Mbits/sec
[ 5] 69.00-70.00 sec 113 MBytes 948 Mbits/sec
[ 5] 70.00-71.00 sec 112 MBytes 942 Mbits/sec
[ 5] 71.00-72.00 sec 113 MBytes 947 Mbits/sec
[ 5] 72.00-73.00 sec 113 MBytes 949 Mbits/sec
[ 5] 73.00-74.00 sec 113 MBytes 948 Mbits/sec
[ 5] 74.00-75.00 sec 113 MBytes 949 Mbits/sec
[ 5] 75.00-76.00 sec 113 MBytes 948 Mbits/sec
[ 5] 76.00-77.00 sec 113 MBytes 948 Mbits/sec
[ 5] 77.00-78.00 sec 113 MBytes 945 Mbits/sec
[ 5] 78.00-79.00 sec 113 MBytes 948 Mbits/sec
[ 5] 79.00-80.00 sec 112 MBytes 937 Mbits/sec
[ 5] 80.00-81.00 sec 113 MBytes 949 Mbits/sec
[ 5] 81.00-82.00 sec 113 MBytes 949 Mbits/sec
[ 5] 82.00-83.00 sec 112 MBytes 941 Mbits/sec
[ 5] 83.00-84.00 sec 113 MBytes 948 Mbits/sec
[ 5] 84.00-85.00 sec 113 MBytes 946 Mbits/sec
[ 5] 85.00-86.00 sec 113 MBytes 948 Mbits/sec
[ 5] 86.00-87.00 sec 113 MBytes 948 Mbits/sec
[ 5] 87.00-88.00 sec 113 MBytes 949 Mbits/sec
[ 5] 88.00-89.00 sec 113 MBytes 948 Mbits/sec
[ 5] 89.00-90.00 sec 113 MBytes 949 Mbits/sec
[ 5] 90.00-91.00 sec 113 MBytes 949 Mbits/sec
[ 5] 91.00-92.00 sec 113 MBytes 948 Mbits/sec
[ 5] 92.00-93.00 sec 113 MBytes 949 Mbits/sec
[ 5] 93.00-94.00 sec 113 MBytes 949 Mbits/sec
[ 5] 94.00-95.00 sec 113 MBytes 945 Mbits/sec
[ 5] 95.00-96.00 sec 113 MBytes 949 Mbits/sec
[ 5] 96.00-97.00 sec 112 MBytes 943 Mbits/sec
[ 5] 97.00-98.00 sec 113 MBytes 945 Mbits/sec
[ 5] 98.00-99.00 sec 113 MBytes 947 Mbits/sec
[ 5] 99.00-100.00 sec 113 MBytes 948 Mbits/sec
[ 5] 100.00-101.00 sec 113 MBytes 948 Mbits/sec
[ 5] 101.00-102.00 sec 113 MBytes 948 Mbits/sec
[ 5] 102.00-103.00 sec 113 MBytes 945 Mbits/sec
[ 5] 103.00-104.00 sec 113 MBytes 947 Mbits/sec
[ 5] 104.00-105.00 sec 113 MBytes 944 Mbits/sec
[ 5] 105.00-106.00 sec 113 MBytes 948 Mbits/sec
[ 5] 106.00-107.00 sec 113 MBytes 948 Mbits/sec
[ 5] 107.00-108.00 sec 113 MBytes 947 Mbits/sec
[ 5] 108.00-109.00 sec 113 MBytes 947 Mbits/sec
[ 5] 109.00-110.00 sec 113 MBytes 946 Mbits/sec
[ 5] 110.00-111.00 sec 113 MBytes 945 Mbits/sec
[ 5] 111.00-112.00 sec 113 MBytes 949 Mbits/sec
[ 5] 112.00-113.00 sec 113 MBytes 949 Mbits/sec
[ 5] 113.00-114.00 sec 113 MBytes 948 Mbits/sec
[ 5] 114.00-115.00 sec 113 MBytes 947 Mbits/sec
[ 5] 115.00-116.00 sec 112 MBytes 940 Mbits/sec
[ 5] 116.00-117.00 sec 113 MBytes 947 Mbits/sec
[ 5] 117.00-118.00 sec 113 MBytes 949 Mbits/sec
[ 5] 118.00-119.00 sec 113 MBytes 949 Mbits/sec
[ 5] 119.00-120.00 sec 113 MBytes 945 Mbits/sec
[ 5] 120.00-120.03 sec 3.39 MBytes 945 Mbits/sec
- - - - - - - - - - - - - - - - - - - - - - - - -
[ ID] Interval Transfer Bandwidth
[ 5] 0.00-120.03 sec 0.00 Bytes 0.00 bits/sec sender
[ 5] 0.00-120.03 sec 13.2 GBytes 947 Mbits/sec receiver
-----------------------------------------------------------
Server listening on 5201
-----------------------------------------------------------

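Conclusion

Comparing the screenshots from the original test with the new results also shows a difference in performance. The original test used an add-in network card, while the newly added test uses the onboard network adapter. The switch was the same in both tests, yet there is a visible gap in performance.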


Changelog

| Date | Change |
|---|---|
| 2017/07/24 | Added iPerf3 |
| 2014/03/30 | Initial version |
